Our new paper, Reality Bites: Making Realist Evaluation Useful in the Real World, written by members of the Learning Group, reflects on our experiences and distills what we’ve learned along the way.

What’s different about realist evaluation?

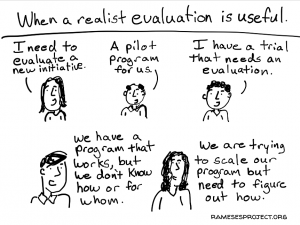

Realist evaluation is a theory-based approach that asks ‘how and why do interventions work or not work, for whom, and in what circumstances?’ What sets it apart from other evaluation approaches is the particular set of assumptions it makes about programmes and about the nature of reality, causality and evidence. These assumptions are grounded in a realist philosophy of the world, discussed further in our paper. This has implications for how an evaluation is designed and conducted, and what the results look like in the end. Wrapping your head around these principles is the first challenge facing those new to realist evaluation.

Itad’s realist evaluation of the UK’s International Climate Fund developed nuanced findings about how and why DFID programmes could be designed to deliver additional climate adaptation or mitigation impacts. This helped inform a review by the Independent Commission for Aid Impact (ICAI) of the UK’s climate finance, and helped DFID identify how they could support programme teams to understand climate risks and opportunities relevant to them.

Why does realist evaluation add value?

In our paper, we argue that realist evaluation can enhance the clarity, depth, and portability of evaluation findings, provide practical ways to grapple with context and complexity, and generate valuable learning opportunities for commissioners and implementers when meaningful stakeholder engagement is built in. Across the evaluations discussed in the paper, a realist approach has helped us generate actionable findings that have fed into learning and decision making for programme implementers and funders.

How can we make realist evaluation work in the ‘real world’?

Through trial and error, we’ve identified lessons we hope may help others grappling with realist evaluation and how to make it work in the ‘real world’ (that is, in large evaluations of complex programmes, within international development and beyond).

- Set boundaries early. Realist evaluation is analytically demanding, and it is rarely possible to look at all dimensions of a complex programme. It can add most value in helping unpack interventions and change processes that are least well understood. Across our evaluations, we could have saved a lot of time by building in early processes to negotiate boundaries and set priorities with stakeholders. Where time and resources are in short supply, it may be more appropriate to use realist evaluation as one component within a larger evaluation, investigating a few aspects of a programme or change processes that are particularly interesting or crucial.

- Keep it simple (at least at first). Context-mechanism-outcome configurations (CMOs) are one of the unique aspects of a realist evaluation. They are theories about how specific contexts (and intervention factors) interact with causal mechanisms to generate outcomes within a programme. When used effectively, they can provide nuanced and generalisable insights into how and why interventions work. However, we often spent too much time developing complicated CMOs too early, before we understood the intervention in sufficient depth. We suggest developing rough theories early on, rather than detailed ones which may later prove to be irrelevant. A lot of time can be lost agonising over the precise formulation of a CMO – it helps to view them as a flexible rather than a rigid framework. Finally, it is often a good idea to avoid realist jargon (like ‘CMO’!) as much as possible when engaging with non-evaluators and when writing up reports.

- Factor in time for iterative activities and stakeholder engagement when planning your budget. Underestimating the time required for evaluation is a common challenge, but a particular risk in realist evaluation. This is partly because of the need for iteration – theories are revised, tested and revised again, potentially bringing in new methods and tools to test evolving hypotheses. We consistently underestimated how long it would take to revise theories, revisit the literature, understand and investigate emerging issues and priorities, build implementer capacity, and maintain positive relationships with new evaluation managers over the course of multi-year evaluations.

- Engage stakeholders at the right time in the right ways. Timing is everything. Realist evaluation has the potential to be hugely valuable where evaluation activities can be aligned with programme decision points, provided that evaluators take care to avoid ‘theory fatigue’ among busy programme staff.

With time, effort, and committed commissioners and implementers, realist evaluation can provide deeper understanding which can lead to better policy – it can be hard work, but it is worth it!

If you want to hear more, watch the recording of the CDI webinar, where we presented our paper in March 2020.

Find the paper on the IDS website.