When I worked in Bangladesh in the mid-90s there was always a steady flow of project and programme workshops going on in Dhaka. One individual always turned up; he became known as ‘Mr Workshop’ and we eventually realised he’d just figured out a good way to get a decent lunch several times a week.

At the end of November, I went to four evaluation symposia in 10 days, and am starting to worry about it becoming a free-lunch habit too. It’s obviously evaluation season since the American Evaluation Association’s annual conference was also running at the same time (Itad at American Evaluation 2017).

Across these symposia, there’s been a really interesting cross-section of evaluation theory and practice. These four events were: Evidence that matters for vulnerable and marginalised people in international development, part of 3ie’s London Evidence week; the Centre for Evaluation of Complexity Across the Nexus (CECAN)’s International Symposium on Complexity Approaches to Evaluate Global Nexus Policy Challenges; the Centre for Development Impact (CDI)’s event on The role of evidence in a changing world; and the Centre for Excellence on Development Impact and Learning (CEDIL)’s symposium on Timely Evaluation for Programme Improvement.

The benefit of having a compressed cross-sectional view of evaluation from four symposia is being able to observe and reflect on what is happening in our space. With the exception of the CECAN event, they all centred on evaluation in international development.

Reflections

There was healthy attention paid to the usefulness of evaluations. The ‘to whom’ question didn’t always get well addressed – it was usually to ‘policy-makers’ or ‘decision-makers’ (terms that really need unpacking), and sometimes to ‘society’ and ‘stakeholders.’ There was (mostly) recognition that a good evaluation doesn’t end with a good report – it’s barely started being useful then.

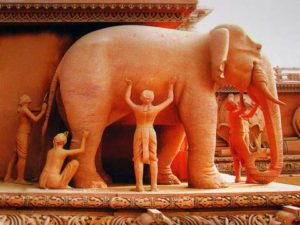

These events covered some big topics and attracted wide audiences. I observed a certain amount of finding out what the elephant looks like. Complexity is always a good topic to get a mix of views, and, as ever, different methodological perspectives bring a range of views about the nature of the evaluation beast.

Some impressive use of digital technology was presented. At the CEDIL event, Martin Dale (PSI) gave a fascinating talk on adding a social media narrative component to the DHIS 2 health management information system to improve the analysis and use of routine data. And at the CECAN event, Sander van der Leuw presented on the Decision Theatre at Arizona State University, used to encourage researchers and leaders to visualise solutions to complex problems.

But mostly good old-fashioned field-work caught my eye. Charlotte Watts talked about her evaluation of the SASA! programme’s work on violence against women and girls (VAWG) in Uganda, Val Curtis on behaviour-centred design research on toilet use in India, and Jean Boulton on her complexity-oriented impact assessment of financial sector deepening in Western Kenya. Not only did this make for more interesting presentations, but it was also a good demonstration that in the complex arena of trying to understand what works to get human beings to do something differently, a keen understanding of context is necessary. In my first position after graduating, conducting agricultural research, my supervisor said: ‘If you want to trust your data, never visit the field.’ I think he was kidding…

Symposium formats

I must confess to being in danger of contracting PowerPoint Poisoning. Kudos to Claire Hutchings from Oxfam for good illustrations and a low text-to-image ratio; Owen Barder at the CDI event for presenting simply with two tabloid front pages and no PowerPoint; and David Byrne at the CECAN event for a 10-minute mini-master class in qualitative methods, with no visual aids.

The ‘three-person panel; 15-minute presentation each; 10 minutes for Q&A’ format is over-used. On the plus side, I heard some really fascinating talks, and a good number really stimulated my thinking. But the opportunity to engage further was often limited and dictated by the clock. CECAN should be congratulated on trying a wider range of approaches, such as two-minute presentations, extended group work sessions, and participants ‘touring’ recurring presentations. At many universities, undergraduates are generally able to access slides for their lectures in advance of the lecture itself, through their institution’s Virtual Learning Environment. I feel the depth of engagement in symposia would benefit from participants similarly having access to the material in advance. Finally, there was a brave and innovative attempt at the CEDIL event to use tweets to distil the essence of break-out groups into 140 characters; maybe a bit of a victory of form over content?

And so, to 2018. I’m currently involved in organising the 2018 UK Evaluation Society conference, so I hope we can take some of our own medicine in designing that!